State of symbolic shapes: May 20 edition

Previous update: State of symbolic shapes branch - #55 by ezyang

Executive summary

- PT Compiler/Distributed offsite. PyTorch compiler and distributed had an offsite! Here’s a summary of what went down: https://docs.google.com/document/d/1hYZJx0-y-UOvA3Ulg4kdjRXAuoWEwvNCuffvYd3Yeno/edit?usp=sharing (Meta only). The short version is we’re going to try harder to trace eager FSDP with the PT2 stack, and that this is very, very complicated.

- GNN victory. puririshi98 reports a 20% end-to-end speedup on pyg_nvfuser_work/gcn_neighborloader_example.py (in the puririshi98/pyg_nvfuser_work repository on GitHub), made possible by dynamic shapes. This is a great improvement over his initial report that eager was faster than compile. Nice work all!

- Notable bug fixes. I mostly focused on clearing dynamic shapes related user issues. I wasn’t able to fix very many before traveling to the offsite.

CI skips. -2, -1, 0, -2 (no change).

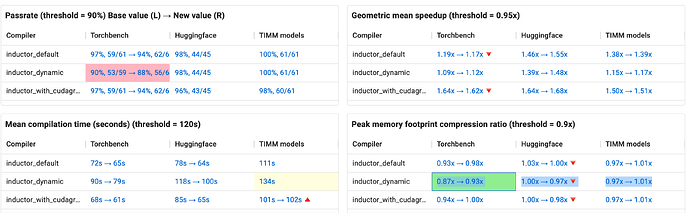

The dashboard (as of 03de158). This week on HUD

| Metric | Torchbench | Huggingface | TIMM models |
|---|---|---|---|
| Passrate | 90%, 53/59 → 88%, 56/64 | 98%, 44/45 | 100%, 61/61 |
| Speedup | 1.09x → 1.12x | 1.39x → 1.48x | 1.15x → 1.17x |
| Comptime | 90s → 79s | 118s → 100s | 134s |
| Memory | 0.87x → 0.93x | 1.00x → 0.97x | 0.97x → 1.01x |

Notes:

- torchbench pass rate change:

- basic_gnn_{edgecnn,gcn,gin,sage} are newly added models which pass out of the box

- vision_maskrcnn was removed from the skip list now that its eager mode is deterministic, but it is still not working with dynamic shapes and needs more work

- hf_LongFormer is now failing because "hf_LongFormer incorrect strides in copy decomp" was reverted

- llama is now failing because locality reordering in training was disabled (it’s not clear why this affects llama though)

- moco passes because of "Save global torch state to restore on graph break" (but perf is still not working, see pytorch/pytorch issue #101939, "Moco failure `TypeError: can't assign a SymInt to a torch.cuda.LongTensor`")

- geomean change

- Improvements in torchbench and timm_models (e.g., alexnet, squeezenet1_1 from torchbench) stem from models that previously had horrible performance no longer having horrible performance. Based on discussion with @ngimel, https://github.com/pytorch/pytorch/pull/101602 is likely the reason, as static shapes is incorrectly eliding indexing bound device asserts, but dynamic shapes is not eliding them and they are killing performance. These disproportionately affect image models, since those models use upsampling, which involves indirect indexing.

- Improvements in huggingface (e.g., DebertaV2 variants) are due to https://github.com/pytorch/pytorch/pull/99483
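To illustrate why fixing a handful of badly performing models can move the suite-wide geomean speedup, here is a minimal sketch. The per-model speedups below are hypothetical numbers chosen for illustration, not values from the dashboard, and the variable names are assumptions.

```python
from statistics import geometric_mean

# Hypothetical per-model speedups over eager (NOT taken from the dashboard).
# Two models start out far slower than eager.
before = [1.20, 1.15, 1.10, 0.40, 0.35]
# The same suite once those two models are roughly on par with eager.
after = [1.20, 1.15, 1.10, 1.00, 0.95]

geomean_before = geometric_mean(before)
geomean_after = geometric_mean(after)

# The three healthy models are unchanged; the geomean still shifts noticeably.
print(f"geomean speedup: {geomean_before:.2f}x -> {geomean_after:.2f}x")
```

Because the geometric mean multiplies all speedups together, a few models far below 1.0x pull the whole figure down, so removing those outliers shows up as a suite-level improvement even when no other model changes.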

What’s next?

- Voz: dynamic by default is ready to land!

- Edward: OSS oncall, probably some FSDP tracing stuff